A Complete V-SLAM Solution Using Jetson Xavier NX, VINS-Fusion & Arducam

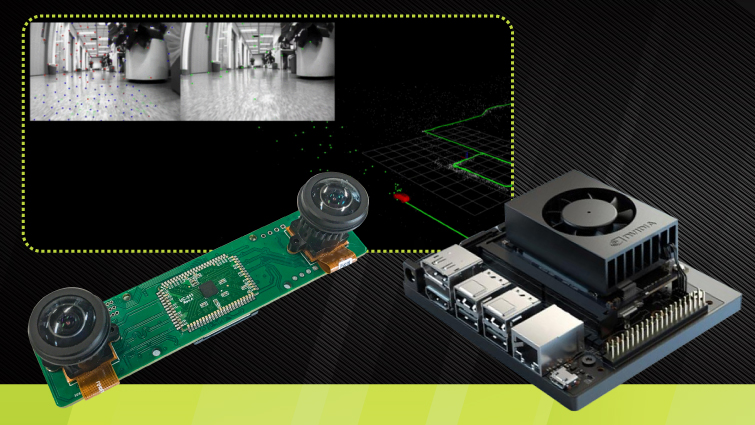

We used an Nvidia Jetson Xavier NX, a stereo camera board featuring two monochrome global-shutter image sensors, and an advanced VI-SLAM algorithm to build an inexpensive V-SLAM system that can be used in a wide range of mobile robotics applications.

This is also a different approach to implementing stereo visual odometry on embedded platforms.

Hardware

- Jetson Xavier NX Developer Kit

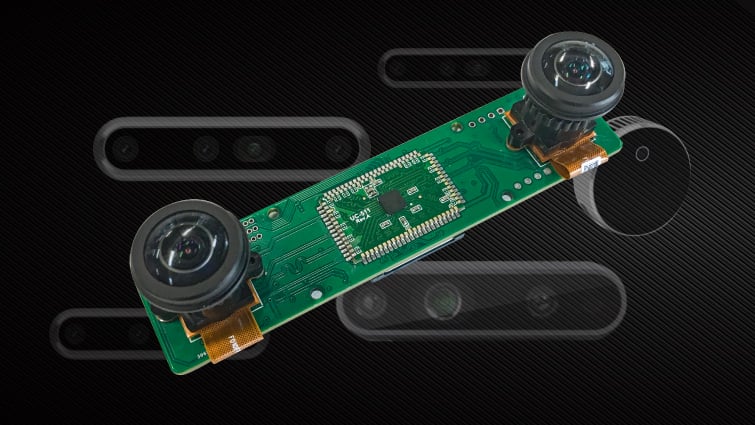

- Arducam Stereo Camera

Software

- Ubuntu 18.04

- CUDA 10.2

- ROS Melodic

- OpenCV 3.4

- igraph

- Eigen3

- Ceres2

How the system works

How to use Arducam stereo camera to perform location with Visual SLAM

1. What is Arducam stereo camera? Stereo vision systems give robots depth perception, which lets machines build an understanding of their environment by estimating the relative distance of objects in view from multiple visual cues. Hence, stereo vision is used in many areas of robotics, such as self-driving cars, […]

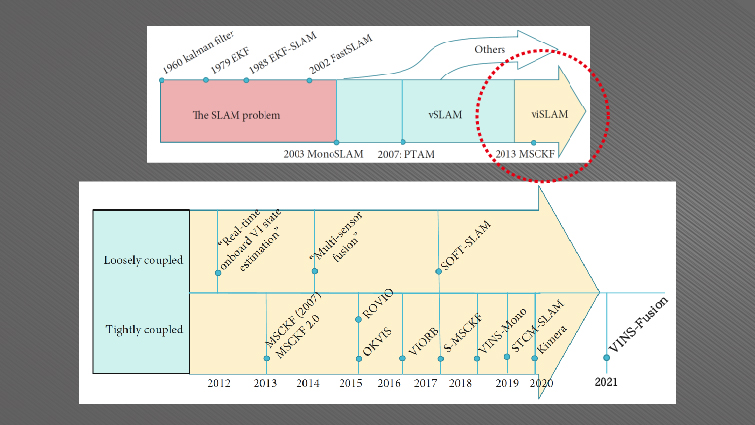
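To make the depth perception idea concrete, here is a minimal sketch of the standard rectified-stereo relation Z = f · B / d, where f is the focal length in pixels, B is the baseline between the two sensors, and d is the disparity of a matched feature in pixels. The function name and the focal length and baseline values below are illustrative assumptions, not the calibration of the Arducam board, and this is not how VINS-Fusion itself is implemented.

```python
import math


def depth_from_disparity(disparity_px, focal_px, baseline_m):
    """Return depth in metres for one matched feature in a rectified stereo pair.

    Uses Z = f * B / d. A non-positive disparity means the match is
    invalid or the point is effectively at infinity, so math.inf is returned.
    """
    if disparity_px <= 0:
        return math.inf
    return focal_px * baseline_m / disparity_px


if __name__ == "__main__":
    # Hypothetical calibration values, chosen only for illustration.
    FOCAL_PX = 800.0    # focal length expressed in pixels
    BASELINE_M = 0.10   # distance between the two sensors in metres

    for disparity in (80.0, 40.0, 8.0):
        depth = depth_from_disparity(disparity, FOCAL_PX, BASELINE_M)
        print(f"disparity {disparity:5.1f} px -> depth {depth:.2f} m")
```

Because depth is inversely proportional to disparity, a fixed matching error of a fraction of a pixel costs far more accuracy for distant points than for nearby ones, which is one reason the baseline and sensor resolution matter when choosing a stereo camera.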

Why we use the open-sourced VINS-Fusion

VINS-Fusion is, in its creator’s own words, “an optimization-based multi-sensor state estimator”. We consider it one of the best-performing open-source VI-SLAM algorithms for building adaptable mapping and localization systems in challenging scenarios such as large-scale, outdoor, or low-texture environments. It is an upgraded version of VINS-Mono, made more flexible by features such as multi-sensor support, online spatial and temporal calibration, and visual loop closure.

Cost-Effective Hardware for Visual Simultaneous Localization & Mapping

In the early days of SLAM, a robot that needed to find out where it was and what its surroundings looked like could rely only on sonar sensors and laser scanners. These low-level perception methods were far from perfect, which helped V-SLAM become mainstream and led to the wide adoption of video sensors.

Along with the development of visual SLAM and its algorithms, the hardware has also diversified, from low-cost monocular cameras to high-end RGB-D vision modules, and the precision and efficiency of V-SLAM systems have improved greatly.

Does this mean that a depth or ToF (time-of-flight) camera provides the most accurate results? To some extent, yes. However, their high prices have kept them from being widely commoditized, so for now they mostly serve research papers and algorithmic studies.

Our V-SLAM proposal, a stereo vision camera coupled with Nvidia’s second most powerful embedded platform, balances cost and performance. It is currently tuned for drift-free, localization-centric SLAM applications, and we plan to bring dense 3D mapping and reconstruction through future updates.

V-SLAM for Robotic Vision, Virtual/Augmented Reality and Autonomous Navigation

V-SLAM has always played a big part in the advancement of modern robotic vision technologies. Various algorithms and hardware have helped us get better and better at feature extraction, matching, and refinement, and well-developed products like robot vacuums and self-navigating drones are already helping us in our daily lives.

It’s also shaping the future of VR/AR and autonomous driving, booming markets that are eagerly waiting for affordable hardware solutions.

Upgrading to VI-SLAM for Higher Accuracy

We can turn our V-SLAM solution into a full-fledged VI-SLAM system by adding an inertial measurement unit (IMU) to our existing stereo cameras, or by revamping them with stereo RGB sensors with depth modules or extra VPUs. Eventually, we will be able to offer more sophisticated VI-SLAM solutions with higher accuracy through optimized input search, pose tracking, mapping, and loop closing.